From Data to Living Models

There is something oddly limiting about the way we describe modern data systems. We draw boxes, connect them with arrows, and call the result a pipeline. But real systems — especially those shaped by streaming data, orchestration, and machine learning — do not behave like rigid pipelines. They evolve.

Systems are not just pipelines. They are living flows.

Ingestion — The Beginning

Every system begins with raw signals: events, logs, APIs, and real-time streams. This is the vineyard stage — messy, continuous, and full of potential.

In practice, this layer captures motion before meaning: fast inputs from the outside world, arriving before they have been shaped into anything durable or useful.

Transformation — Shaping the Flow

With Apache Beam pipelines running on Google Cloud Dataflow, raw events are cleaned, structured, enriched, and prepared for downstream use. This is where movement begins to take form.

Not unlike fermentation, transformation is less about imposing order and more about guiding raw material toward something expressive, reliable, and ready to mature.
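To make the cleaning, structuring, and enriching steps concrete, here is a minimal sketch in plain Python. It assumes JSON-encoded events with hypothetical `user_id`, `ts` (epoch seconds), and `locale` fields, plus a hypothetical `COUNTRY_BY_REGION` table standing in for reference data. It is not Beam code itself; each step is a small pure function so it could be wrapped in a Beam transform.

```python
import json
import math
from datetime import datetime, timezone
from typing import Iterable, Iterator, Optional

# Hypothetical reference data used for enrichment (an assumption for illustration).
COUNTRY_BY_REGION = {"AT": "Austria", "DE": "Germany", "FR": "France"}
REQUIRED_FIELDS = ("user_id", "ts")


def parse(raw: str) -> Optional[dict]:
    """Clean: keep only well-formed JSON objects with the required fields."""
    try:
        event = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(event, dict) or any(f not in event for f in REQUIRED_FIELDS):
        return None
    try:
        ts = float(event["ts"])
    except (TypeError, ValueError):
        return None  # reject timestamps that are not numeric
    if not math.isfinite(ts):
        return None  # reject NaN and infinity
    return event


def structure(event: dict) -> dict:
    """Structure: map loosely typed input onto a fixed schema."""
    return {
        "user_id": str(event["user_id"]).strip(),
        "event_time": datetime.fromtimestamp(float(event["ts"]), tz=timezone.utc).isoformat(),
        "locale": str(event.get("locale", "und")).lower(),
    }


def enrich(record: dict) -> dict:
    """Enrich: attach context from reference data without mutating the input."""
    parts = record["locale"].split("-")
    region = parts[1].upper() if len(parts) > 1 else None
    return {**record, "country": COUNTRY_BY_REGION.get(region, "unknown")}


def transform(raw_lines: Iterable[str]) -> Iterator[dict]:
    """Run clean -> structure -> enrich over a batch of raw lines."""
    for raw in raw_lines:
        event = parse(raw)
        if event is not None:
            yield enrich(structure(event))
```

In a real Beam pipeline, the same functions could be chained as `lines | beam.Map(parse) | beam.Filter(lambda e: e is not None) | beam.Map(structure) | beam.Map(enrich)`. Keeping each step a pure function makes it easy to test outside the runner.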

The Data Lake — A Living State

At the center sits the Data Lake, built on Google Cloud Storage (GCS) and BigQuery. It serves as a storage layer, a base for further transformation, and a durable foundation for analytics and model development.

The lake is not where data stops. It is where data learns how to persist.

Here, data accumulates context, structure, and history. It becomes queryable, reusable, and eventually ready to support features, training, and production inference.
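One common way to let history accumulate in a form BigQuery can query is Hive-style partitioning: objects are written under `key=value` path segments such as `dt=2024-01-09/`, which BigQuery can expose as partition columns when an external table is configured for hive partitioning. The sketch below only computes object paths and groups records by them; the bucket and dataset names are hypothetical, and the actual upload to GCS is left out.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Dict, Iterable, List


def partition_prefix(bucket: str, dataset: str, event_time: str) -> str:
    """Build a Hive-style prefix (dt=YYYY-MM-DD/hour=HH) from an ISO-8601 timestamp."""
    ts = datetime.fromisoformat(event_time)
    # Normalize to UTC so the partition does not depend on the producer's offset.
    # Naive timestamps are assumed to already be in UTC.
    ts = ts.replace(tzinfo=timezone.utc) if ts.tzinfo is None else ts.astimezone(timezone.utc)
    return f"gs://{bucket}/{dataset}/dt={ts:%Y-%m-%d}/hour={ts:%H}/"


def group_by_partition(records: Iterable[dict], bucket: str, dataset: str) -> Dict[str, List[dict]]:
    """Group records by partition prefix so each group can be written as its own file(s)."""
    groups: Dict[str, List[dict]] = defaultdict(list)
    for record in records:
        groups[partition_prefix(bucket, dataset, record["event_time"])].append(record)
    return dict(groups)
```

Partitioning by event time rather than arrival time is a design choice: late events land next to their peers, at the cost of occasionally writing into older partitions.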

Features and Training — Meaning Emerges

As data matures, it becomes features. With Vertex AI, models can learn from historical patterns and move from static records toward adaptive intelligence.

This layer is where the system begins to form judgment: selecting signals, learning patterns, and turning accumulated history into future-facing behavior.
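Turning accumulated history into features usually means aggregating past events per entity while making sure a feature never "sees" events after the moment it describes; otherwise the model trains on information it will not have at serving time. The sketch below computes a few per-user features as of a cutoff. The feature names and the 7-day window are hypothetical choices, and it uses no Vertex AI API; it only illustrates the aggregation.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, Iterable, List


def build_features(events: Iterable[dict], as_of: datetime, window_days: int = 7) -> Dict[str, dict]:
    """Aggregate per-user features using only events strictly before `as_of`.

    `as_of` and the event timestamps must both be timezone-aware so they compare safely.
    """
    window_start = as_of - timedelta(days=window_days)
    history: Dict[str, List[datetime]] = defaultdict(list)
    for event in events:
        t = datetime.fromisoformat(event["event_time"])
        if t < as_of:  # point-in-time cut: ignore anything at or after the cutoff
            history[event["user_id"]].append(t)

    features: Dict[str, dict] = {}
    for user_id, times in history.items():
        features[user_id] = {
            f"events_last_{window_days}d": sum(1 for t in times if t >= window_start),
            "total_events": len(times),
            "days_since_last_event": (as_of - max(times)).days,
        }
    return features
```

Computing the same function at several `as_of` dates yields training rows whose features only reflect the past relative to each label.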

Serving and Monitoring — Keeping Systems Alive

Intelligence only matters when it can be served reliably. Endpoints, APIs, observability, and monitoring keep the system responsive after deployment.

A living model is not simply trained and released. It is observed, measured, rate-limited, validated, and continuously interpreted over time.
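"Observed, measured, rate-limited, validated" maps onto a few small mechanisms that sit in front of a model. The sketch below is framework-agnostic: `predict` is a hypothetical stand-in for a call to a deployed model (for example, a Vertex AI endpoint, which is not shown). It combines a token-bucket rate limiter, a check for required input features, and simple counters that a monitoring system could collect.

```python
import time
from collections import Counter
from typing import Any, Callable, Dict, Iterable


class TokenBucket:
    """Token-bucket limiter: refills at `rate` tokens per second, up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock  # injectable so tests can control time
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class GuardedEndpoint:
    """Wraps a model call with rate limiting, input validation, and counters."""

    def __init__(self, predict: Callable[[Dict[str, Any]], Any], limiter: TokenBucket,
                 required_features: Iterable[str]):
        self.predict = predict  # hypothetical model call
        self.limiter = limiter
        self.required = set(required_features)
        self.metrics: Counter = Counter()

    def handle(self, payload: Dict[str, Any]) -> Dict[str, Any]:
        self.metrics["requests"] += 1
        if not self.limiter.allow():
            self.metrics["rate_limited"] += 1
            return {"status": 429}
        missing = self.required - set(payload)
        if missing:
            self.metrics["invalid"] += 1
            return {"status": 400, "error": f"missing features: {sorted(missing)}"}
        self.metrics["served"] += 1
        return {"status": 200, "prediction": self.predict(payload)}
```

Checking the rate limit before validation means malformed requests still consume quota, which usually suits defending against noisy clients; reversing the order is a reasonable alternative when invalid requests should be free.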

Architecture

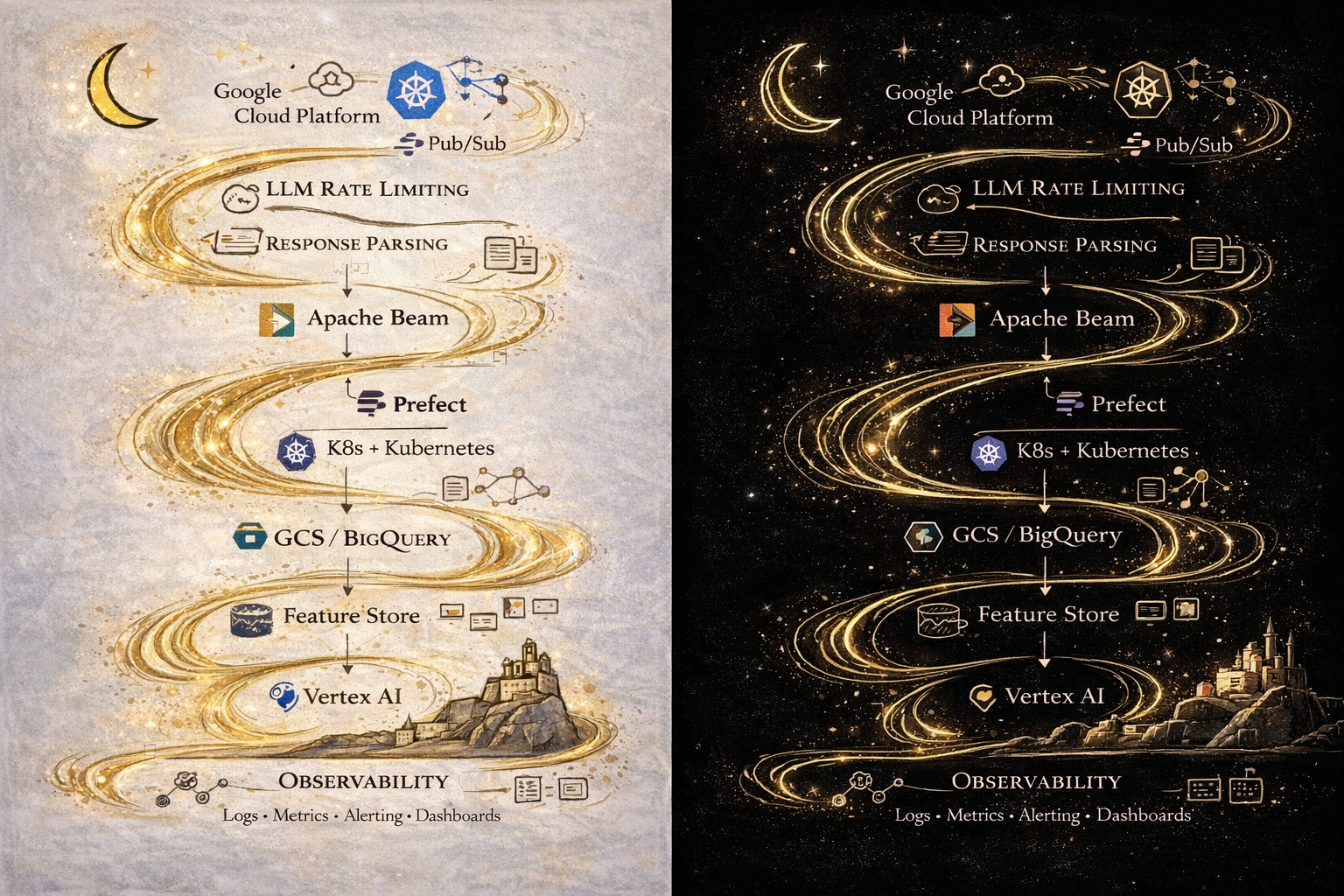

The architecture above is drawn as a flowing structure rather than a rigid block diagram. It moves from ingestion to transformation, through storage and orchestration, and onward to training, serving, LLM operations, and observability.

Final Thought

We often say that we build pipelines. In reality, what we build are systems that learn, evolve, and respond under changing conditions.

From data to living models.