Imagine two everyday situations.

First, you ask someone for help. If the person hesitates and begins their response with “but…”, you might immediately guess that there is a high chance they will refuse. Your mind is updating its expectation based on new evidence.

Second, imagine a colleague who comes to work every day suddenly misses work. Without knowing the reason, you might infer that they are sick or dealing with a personal issue at home.

Both situations reflect a common pattern in human reasoning: we update our beliefs when new information becomes available.

This is exactly the idea behind Bayes’ theorem.

In simple terms, Bayes’ theorem describes how prior beliefs can be updated when new evidence appears. Our initial assumptions may not perfectly reflect reality, but by incorporating additional information, we can refine our predictions and reduce uncertainty.

Everyday Examples of Bayesian Thinking

Example 1: Weather Forecast

Suppose the weather forecast predicts a 20% chance of rain on Wednesday and 30% on Thursday. Most people would probably decide not to bring an umbrella.

However, imagine that it actually rains on Wednesday.

From past experience, you know that when it rains one day, the probability of rain the following day may increase significantly, perhaps to around 80%. After observing Wednesday’s rain, your estimate for Thursday rises from the forecast’s 30% to roughly 80%.

As a result, you decide to bring an umbrella the next day.

Your belief about Thursday’s weather has been updated using new evidence.

Example 2: Finding an Intersection

Imagine driving on a straight road where intersections are rare.

Based on your knowledge, the probability of encountering an intersection at any moment might be only 5%, meaning there is a 95% chance you would guess incorrectly if you tried to turn randomly.

Now suppose you notice that the car behind you turns on its right signal.

Based on typical driving behavior, there might be:

- a 90% chance that the driver is preparing to turn at an intersection

- a 10% chance that they are simply parking on the roadside

This additional information significantly changes your belief about whether an intersection is nearby. Following the signal becomes a much safer decision than guessing blindly.

Understanding Bayes’ Theorem

Bayes’ theorem describes the probability of an event based on prior knowledge of related events.

Mathematically, it is expressed as:

\[ P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)} \]

Where:

- **P(A | B)** – probability of event A given that B has occurred

- **P(B | A)** – probability of event B given A

- P(A) – prior probability of A

- P(B) – probability of observing B

In Bayesian reasoning, we combine prior beliefs with observed evidence to produce a posterior probability.
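In practice, P(B) is rarely known directly. A standard way to obtain it, and the form used in the diagnosis example that follows, is the law of total probability. Here ¬A is notation introduced only for this sketch and means "A did not occur":

\[ P(B) = P(B \mid A)\,P(A) + P(B \mid \neg A)\,P(\neg A) \]

Substituting this into the theorem gives:

\[ P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B \mid A)\,P(A) + P(B \mid \neg A)\,P(\neg A)} \]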

A Medical Diagnosis Example

Consider a medical test used to detect a rare disease such as cancer.

Assume the following:

- Only 0.8% of the population has the disease

- The test correctly detects cancer 98% of the time when the patient actually has cancer

- The test correctly returns a negative result 97% of the time for healthy patients

This gives us:

- P(cancer) = 0.008

- P(non-cancer) = 0.992

- P(positive | cancer) = 0.98

- P(negative | non-cancer) = 0.97

- P(positive | non-cancer) = 1 − 0.97 = 0.03

Now suppose a patient receives a positive test result. What is the probability that the patient actually has cancer?

Using Bayes’ theorem:

\[ P(\text{cancer} \mid \text{positive}) = \frac{P(\text{positive} \mid \text{cancer})\,P(\text{cancer})}{P(\text{positive} \mid \text{cancer})\,P(\text{cancer}) + P(\text{positive} \mid \text{non-cancer})\,P(\text{non-cancer})} \]

\[ = \frac{0.98 \times 0.008}{0.98 \times 0.008 + 0.03 \times 0.992} \approx 20.85\% \]
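The arithmetic above can be checked with a few lines of Python. This is a minimal sketch rather than a reference implementation; the function name `posterior_given_positive` and its parameter names are chosen here for illustration and do not come from any library.

```python
def posterior_given_positive(prior, sensitivity, specificity):
    """Return P(disease | positive test) via Bayes' theorem."""
    false_positive_rate = 1.0 - specificity          # P(positive | no disease)
    true_pos = sensitivity * prior                   # P(positive | disease) * P(disease)
    false_pos = false_positive_rate * (1.0 - prior)  # P(positive | no disease) * P(no disease)
    return true_pos / (true_pos + false_pos)


# Figures from the example above.
p = posterior_given_positive(prior=0.008, sensitivity=0.98, specificity=0.97)
print(f"P(cancer | positive) = {p:.2%}")  # prints: P(cancer | positive) = 20.85%
```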

Surprisingly, even with a positive test result, the probability that the patient actually has cancer is only about 21%.

Why?

Because the disease itself is very rare in the population.

Even a highly accurate test can produce many false positives when applied to a large number of healthy people.
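Counting people instead of multiplying probabilities can make this easier to see. The sketch below applies the example's rates to a hypothetical cohort of 100,000 people; the cohort size is an assumption picked only because it yields round numbers.

```python
population = 100_000   # hypothetical cohort size, chosen only for round numbers
prevalence = 0.008     # P(cancer)
sensitivity = 0.98     # P(positive | cancer)
specificity = 0.97     # P(negative | non-cancer)

sick = round(population * prevalence)
healthy = population - sick

true_positives = round(sick * sensitivity)             # sick people who test positive
false_positives = round(healthy * (1 - specificity))   # healthy people who test positive

# Share of all positive results that belong to people who are actually sick.
share_sick = true_positives / (true_positives + false_positives)

print(f"{sick} sick, {healthy} healthy")
print(f"{true_positives} true positives, {false_positives} false positives")
print(f"{share_sick:.2%} of positive results belong to sick patients")
```

With these figures, the 2,976 false positives outnumber the 784 true positives by nearly four to one, which is why a positive result corresponds to only about a 21% chance of disease.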

Why This Happens

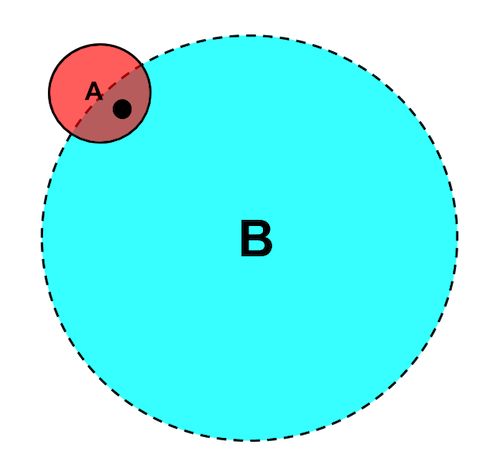

A helpful way to understand this is through visualization.

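When the base probability of an event is extremely small, even strong evidence may leave the final probability low. Here the positive result raises the estimate from 0.8% to about 21%: a large relative jump that still falls well short of certainty.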

Although the test is very accurate, the population of healthy individuals is so large that false positives accumulate quickly.

This phenomenon is known as the base rate effect; overlooking it is commonly called the base rate fallacy.
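To see how strongly the base rate drives the result, the sketch below holds the test's accuracy fixed at the figures from the diagnosis example and varies only the prior. Apart from 0.008, the base rates are illustrative values that do not appear in the example, and `posterior` is a hypothetical helper name.

```python
def posterior(prior, sensitivity=0.98, specificity=0.97):
    """P(condition | positive) for a test with the diagnosis example's accuracy."""
    hit = sensitivity * prior                      # true positives (as a probability)
    false_alarm = (1 - specificity) * (1 - prior)  # false positives (as a probability)
    return hit / (hit + false_alarm)


# Only 0.008 comes from the example; the other base rates are illustrative.
base_rates = [0.001, 0.008, 0.05, 0.20, 0.50]
posteriors = [posterior(rate) for rate in base_rates]

for rate, post in zip(base_rates, posteriors):
    print(f"base rate {rate:6.1%} -> P(condition | positive) = {post:6.1%}")
```

The same test that gives roughly 3% at a 0.1% base rate gives about 97% at a 50% base rate, so the test's accuracy alone says little about what a positive result means.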

Why Bayes’ Theorem Matters

Bayes’ theorem teaches us an important lesson:

Probabilities should not be interpreted in isolation — they must be evaluated in context.

New evidence can significantly alter our predictions, and sometimes the result can be quite counterintuitive.

This principle is widely applied in fields such as:

- medical diagnosis

- machine learning

- fraud detection

- marketing analytics

- stock prediction

- image recognition

Bayesian methods also play a critical role in modern AI and probabilistic machine learning, where models continuously update predictions as new data arrives.
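A small sketch of this kind of continuous updating, reusing the numbers from the diagnosis example: each posterior becomes the prior for the next observation. It assumes that repeated test results are conditionally independent given the patient's true status, which the example does not state and which may not hold for real tests. The sequence of outcomes and the helper name `update` are hypothetical.

```python
def update(prior, sensitivity, specificity, positive):
    """One Bayesian update of P(disease) after a single test result."""
    if positive:
        like_sick, like_healthy = sensitivity, 1 - specificity
    else:
        like_sick, like_healthy = 1 - sensitivity, specificity
    numerator = like_sick * prior
    return numerator / (numerator + like_healthy * (1 - prior))


belief = 0.008                  # prior from the diagnosis example
results = [True, True, False]   # hypothetical sequence of test outcomes
history = [belief]

for outcome in results:
    # The posterior from the previous step serves as the prior for this one.
    belief = update(belief, 0.98, 0.97, outcome)
    history.append(belief)

print([f"{b:.1%}" for b in history])
```

Under the independence assumption, a second positive result lifts the estimate from about 21% to about 90%, and a subsequent negative result pulls it back down sharply.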

Final Thoughts

Bayesian thinking mirrors how humans naturally reason about uncertainty.

We begin with assumptions, observe new evidence, and adjust our beliefs accordingly.

Sometimes high-probability events become unlikely when additional context is considered, while seemingly improbable events become more plausible.

In this way, Bayes’ theorem reminds us that understanding uncertainty requires both theory and evidence — and the curiosity to question unexpected results.